Contribute Enterprise

Knowledge platform

AI-assisted upload, smarter reviews, and structured workflows for enterprise contribution.

Region

Hyderabad, Telangana

2024 - 2025

Product Type

Enterprise SaaS Platform

Industry

Enterprise Technology · Knowledge Management

The project itself :

Project Overview

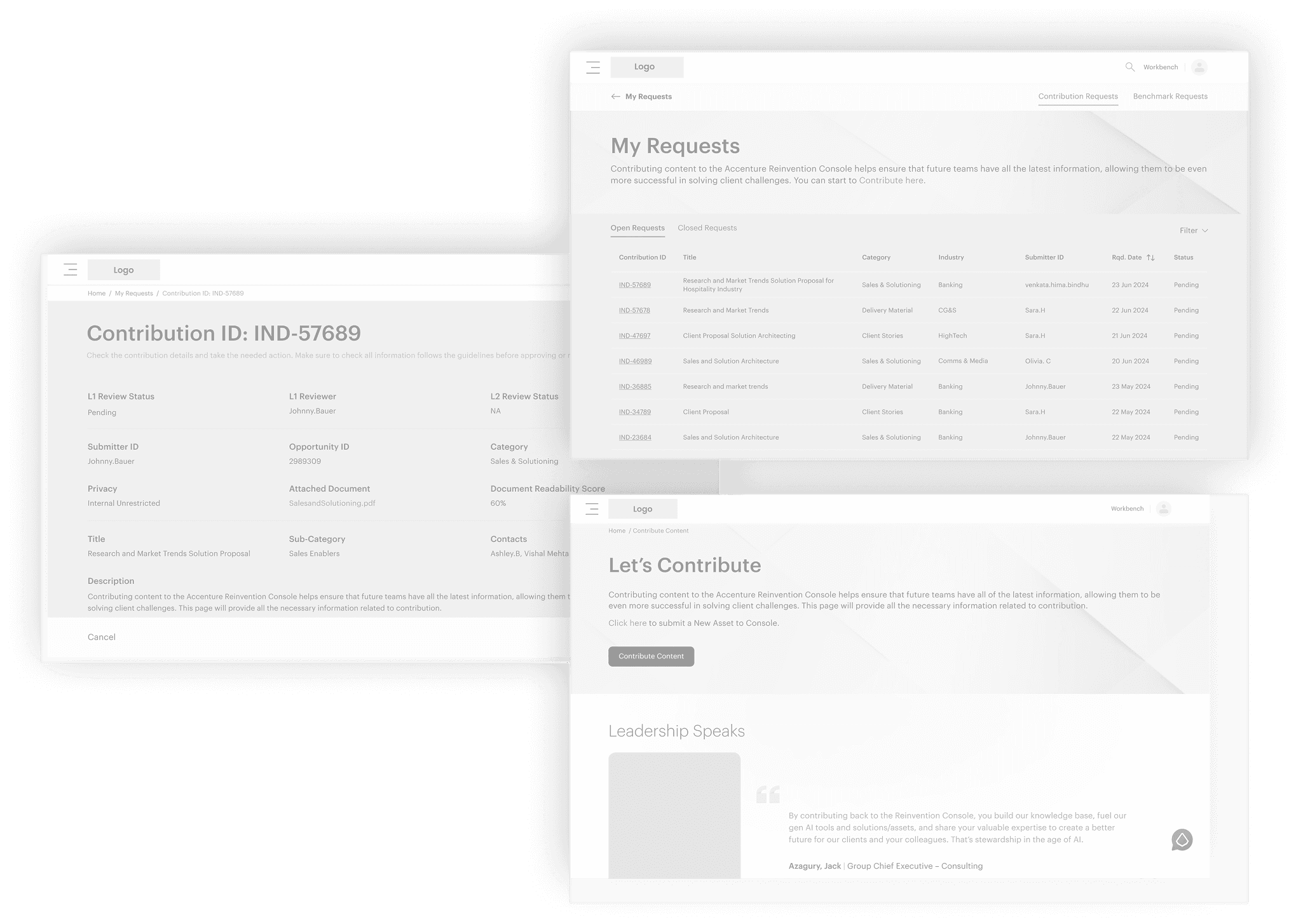

The Contribute platform is a knowledge-sharing module within ARC, an enterprise system used by business units to submit, review, and govern structured content at scale.

The platform enables contributors to upload knowledge assets, which are evaluated, categorized, and approved through a multi-level governance workflow.

This redesign focused on transforming the existing manual, form-heavy submission experience into a structured, AI-assisted workflow to improve clarity, compliance, and operational efficiency.

Problem:

The submission process relied on manual, unstructured inputs, leading to inconsistent categorization, governance gaps, and increased reviewer workload. As usage scaled, these inefficiencies slowed review cycles and reduced compliance clarity.

Goal:

The goal was to introduce a structured, AI-assisted submission workflow that improved governance consistency, reduced reviewer effort, and streamlined decision-making at scale.

My role:

UX Designer (End-to-End), supporting GenAI workflow integration.

Responsibilities:

Problem framing and workflow analysis

Defining personas and user journeys

Restructuring information architecture

Lo-fi → hi-fi design

GenAI workflow integration

Reusable components

Usability testing and iterations

Impact:

Reduced reviewer effort by ~60% (expected)

Improved metadata completeness across submissions

Enabled scalable L1/L2 review workflows with AI-assisted signals

Background Insight:

Historical Context

Before redesign, the system relied heavily on manual submission, metadata entry, classification, and routing. Over time, increased content volume exposed inefficiencies — inconsistent metadata, unclear review ownership, duplicated work, and slow turnaround.

This historical audit gave direction for a new, scalable contribution system.

Old Workflow Pain Points

Contributor

Manual Entry

Admin

Reviewer

Publish

Unclear Requirements

No Guidance

Time Consuming

Error prone

Routing Delays

Overloaded

Heavy categorization

inconsistent quality

Quality issues

Slow turnaround

why redesign:

Why this problem matters

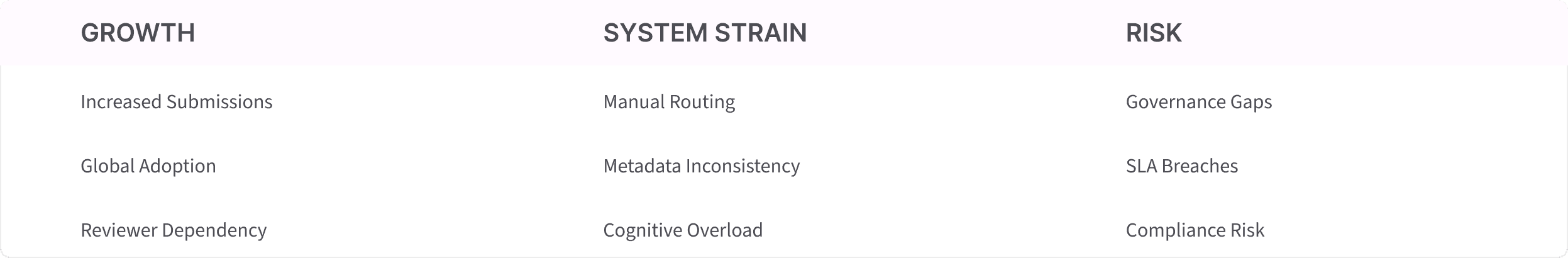

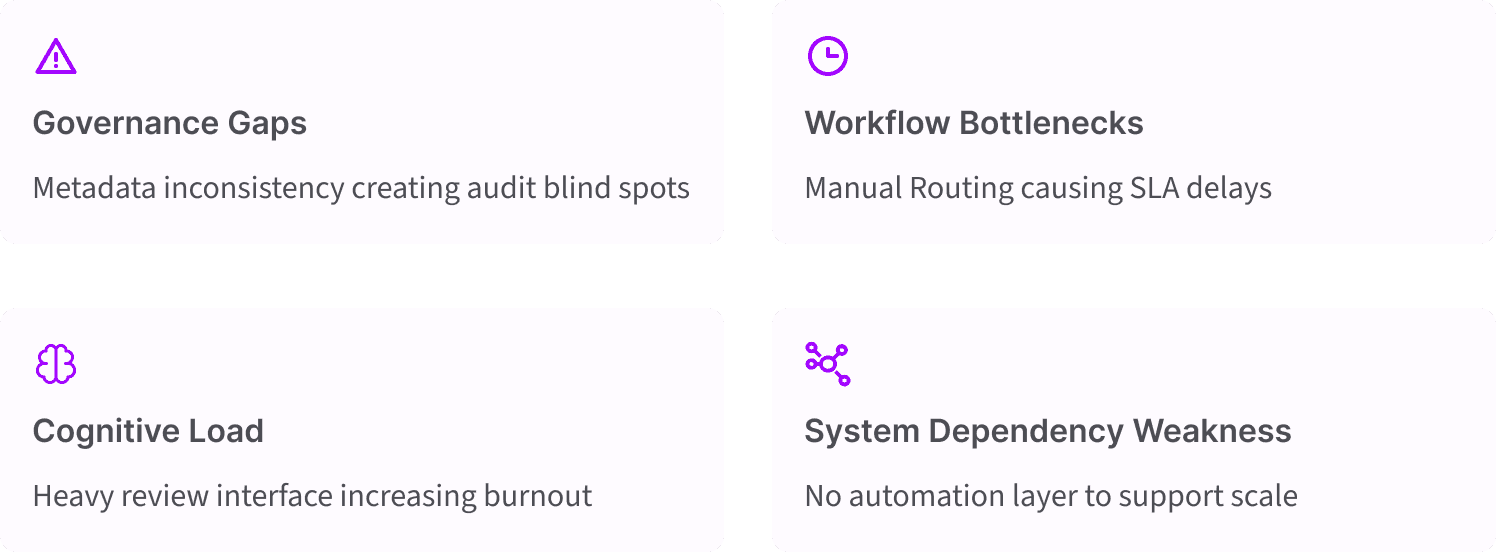

As submission volume increased globally, the existing workflow architecture began to show structural limitations.

Governance Gaps:

Inconsistent metadata tagging

No automated risk scoring

Lack of structured validation rules

Workflow Inefficiencies:

Manual L1 → L2 routing

High review turnaround time

Repeated back-and-forth between contributor and reviewer

Manual categorization efforts

Cognitive Overload:

Unstructured submission format

High correction frequency

Reviewer fatigue

This was not a cosmetic UX issue; it was a governance scalability problem within an enterprise knowledge system.

strategic diagnosis:

Problem Framing

Was this a usability issue or a governance breakdown at scale?

Stakeholder Ecosystem:

Contributor

Admin

L1 Review

L2 Review

Compliance

Business

Impacted KPIs:

Instead of immediately redesigning the interface, I adopted a hypothesis-driven approach to validate the root causes behind governance and workflow breakdowns.

This ensured that research efforts were focused, testable, and aligned with measurable business outcomes.

Hypotheses:

H1: If we replace manual metadata entry with structured, guided inputs and AI-assisted categorization, governance consistency and audit traceability will improve by reducing classification errors and submission variability.

H2: If we introduce automated routing logic based on submission type and metadata signals, reviewer workload will decrease and review turnaround time will improve due to reduced manual triaging.

H3: If we restructure the submission flow into guided, step-based sections with contextual prompts, compliance clarity and submission quality will improve by reducing ambiguity and incomplete information.
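To make H2 concrete, here is a minimal sketch of routing driven by submission type and metadata signals. The case study does not specify the real rules, so the field names, the required-metadata list, the `client_deliverable` type, the queue names, and the 0.7 risk threshold are all illustrative assumptions.

```python
from dataclasses import dataclass, field

# Hypothetical required metadata fields; the real list is not given in the case study.
REQUIRED_FIELDS = ("title", "category", "business_unit")


@dataclass
class Submission:
    submission_type: str                    # e.g. "template", "client_deliverable" (assumed values)
    metadata: dict = field(default_factory=dict)
    risk_score: float = 0.0                 # assumed 0.0-1.0 AI-assisted signal


def route(sub: Submission) -> str:
    """Return the name of the queue a submission should land in (assumed queue names)."""
    missing = [name for name in REQUIRED_FIELDS if not sub.metadata.get(name)]
    if missing:
        # Incomplete metadata goes back to the contributor instead of a reviewer.
        return "contributor_fix"
    if sub.risk_score >= 0.7 or sub.submission_type == "client_deliverable":
        # Sensitive or high-risk items are flagged for priority L1 handling.
        return "l1_priority"
    return "l1_standard"


complete = {"title": "Onboarding guide", "category": "HR", "business_unit": "Ops"}
print(route(Submission("template", complete, risk_score=0.2)))   # l1_standard
print(route(Submission("template", {"title": "Draft"})))          # contributor_fix
```

The point of the sketch is that triage decisions an admin previously made by hand become explicit, testable rules.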

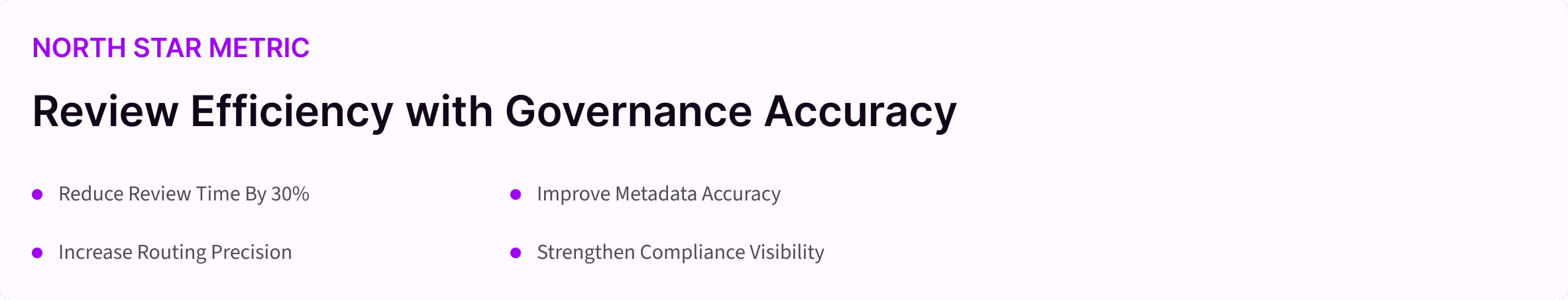

Before initiating deep research, I aligned with stakeholders on what long-term success would mean for the platform.

This ensured that hypothesis validation and solution exploration remained aligned with measurable product outcomes.

Research Approach

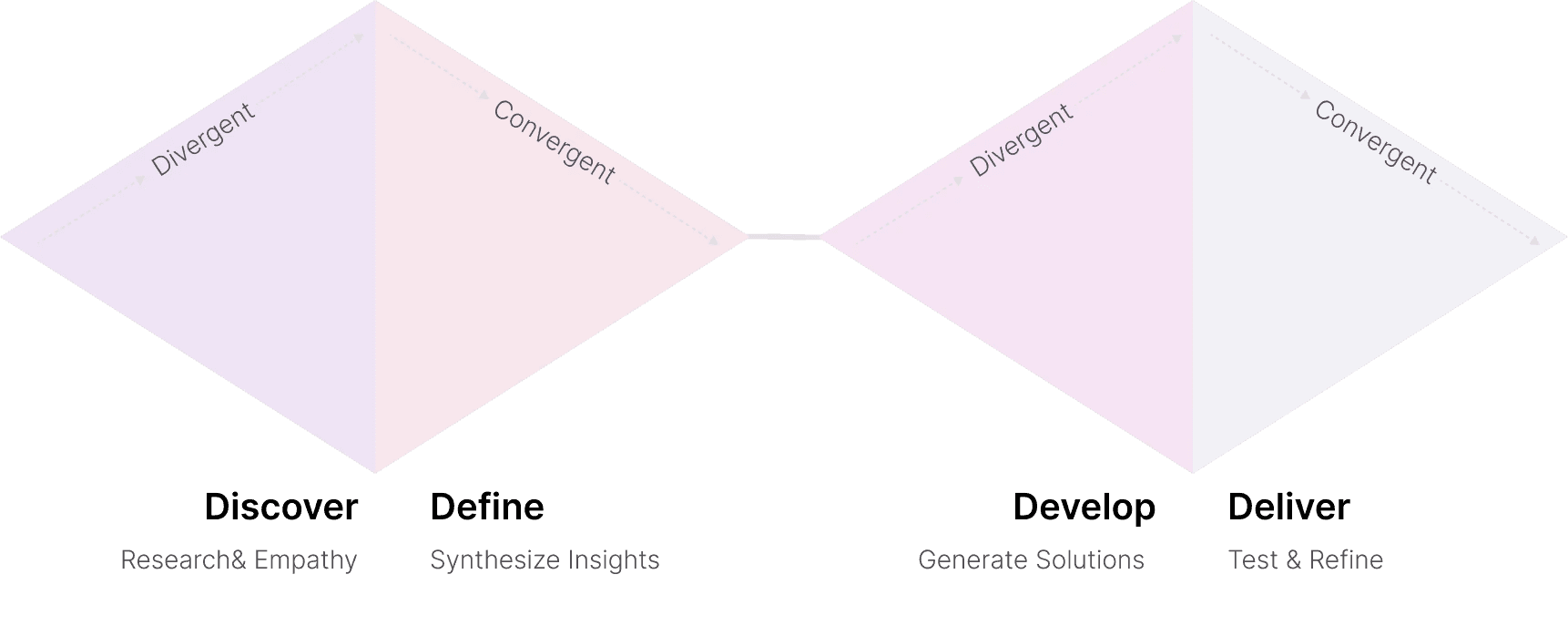

Double Diamond + Behavioral

why redesign:

Research Strategy

The research objective was to validate the root causes behind governance breakdown and workflow inefficiency at scale.

Specifically, I aimed to determine whether inefficiencies were caused by user behavior, structural workflow gaps, metadata inconsistencies, or routing logic limitations.

Based on the hypotheses, I designed a mixed-method research framework combining quantitative behavioral analysis with qualitative workflow validation.

Research → Insights → Opportunities → Prioritization → MVP → Architecture → Validation

I collaborated with the platform analytics team to review behavioral metrics such as review turnaround time, SLA adherence, and rejection rates. I analyzed historical trends to identify whether inefficiencies were isolated incidents or systemic patterns.

Quantitative Signals:

Average review turnaround time (e.g., 4.2 days)

SLA breach percentage (e.g., 18%)

Metadata completion rate (e.g., 62%)

Rejection rate (e.g., 27%)

Re-routing frequency

Feature usage % (e.g., only 40% use structured tagging)
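As a rough illustration of how signals like these could be derived from review logs, the sketch below computes four of them from a list of records. The `ReviewRecord` shape and its field names are assumptions for illustration, not the analytics team's actual schema, and the sample values are made up.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ReviewRecord:
    # Hypothetical log shape; real field names are not given in the case study.
    turnaround_days: float
    sla_days: float
    metadata_filled: int
    metadata_total: int
    rejected: bool


def summarize(records: list[ReviewRecord]) -> dict[str, float]:
    """Aggregate review records into the headline quantitative signals."""
    n = len(records)
    filled = sum(r.metadata_filled for r in records)
    total = sum(r.metadata_total for r in records)
    return {
        "avg_turnaround_days": mean(r.turnaround_days for r in records),
        "sla_breach_pct": 100 * sum(r.turnaround_days > r.sla_days for r in records) / n,
        "metadata_completion_pct": 100 * filled / total,
        "rejection_pct": 100 * sum(r.rejected for r in records) / n,
    }


sample = [
    ReviewRecord(2.0, 3.0, 5, 5, False),
    ReviewRecord(4.0, 3.0, 3, 5, True),
]
print(summarize(sample))
```

Tracking the same summary before and after a change gives a like-for-like baseline for each hypothesis.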

all about the user

User Research

A design only works when it understands its users. We used a mixed-method approach combining interviews, field observations, and system analysis to understand how contributors submit knowledge assets and how reviewers and admins manage them.

Qualitative methods helped me understand the why behind user behavior through interviews, observation, and contextual inquiry.

Qualitative Signals:

Contributor interviews

Reviewer shadowing

Admin stakeholder sessions

Workflow observation

User Personas

Secondary Research

Personas representing enterprise employees, reviewers and admins navigating contribution, review, and approval workflows

Contributor

"I'm not sure what details are required, so I just upload something and hope it's right."

Reviewer L1

"I spend most of my time correcting metadata and manually publishing to the KX site."

Reviewer (L2)

"I get assets that should have been filtered out earlier & the publishing categories."

Admin

"Routing assets manually takes up most of my day. All the docs are cluttered."

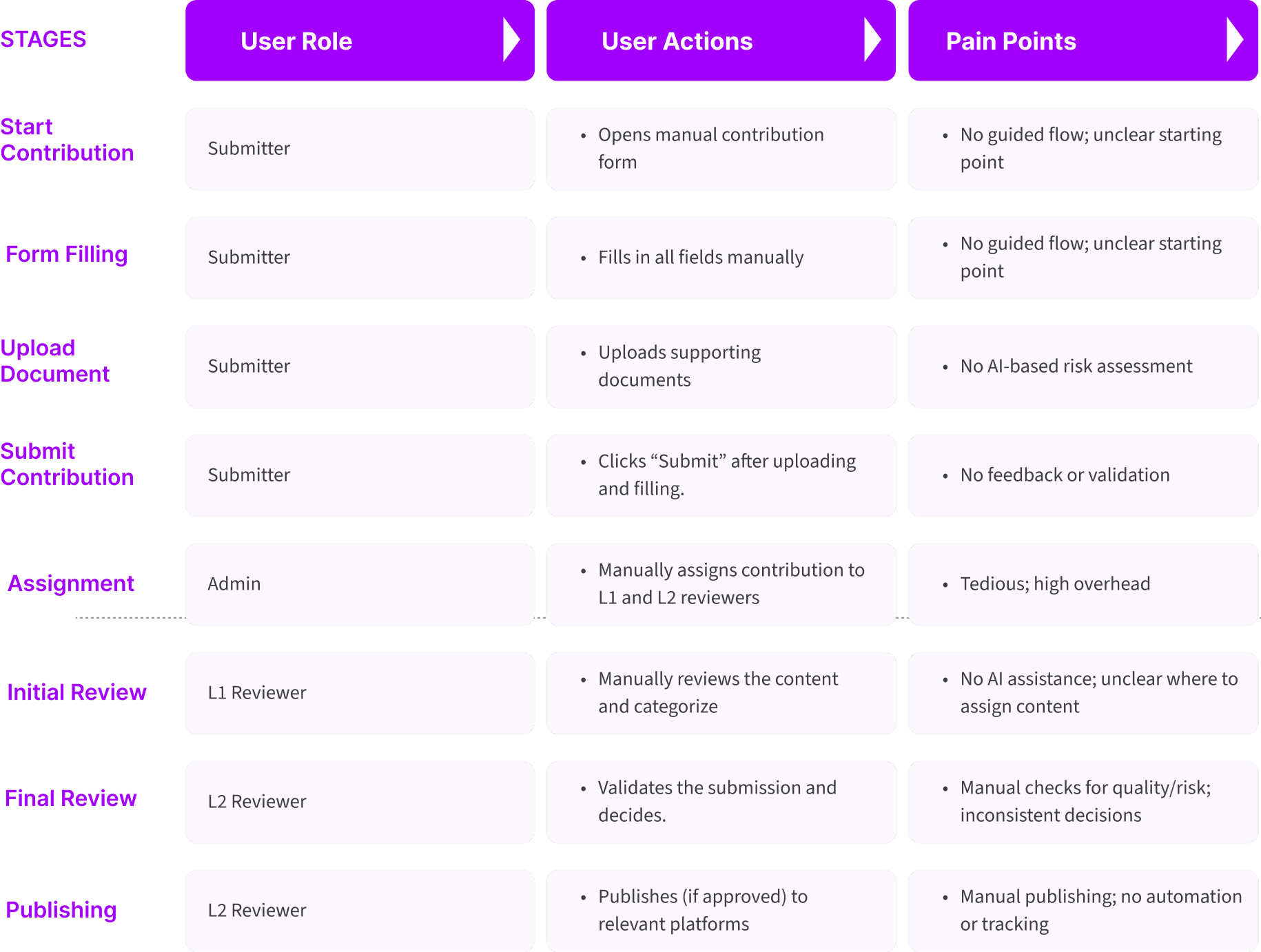

Pain Points

These are the core user pain points synthesized from behavioral patterns, secondary research, and complaint data. They represent the real obstacles employees face while trying to perform their role-specific tasks. These insights directly shaped the foundational design decisions in Contribute.

Contributors unsure of what qualifies as a "good contribution"

Reviewers manually fill metadata

L1/L2 roles unclear

Admins overloaded with routing

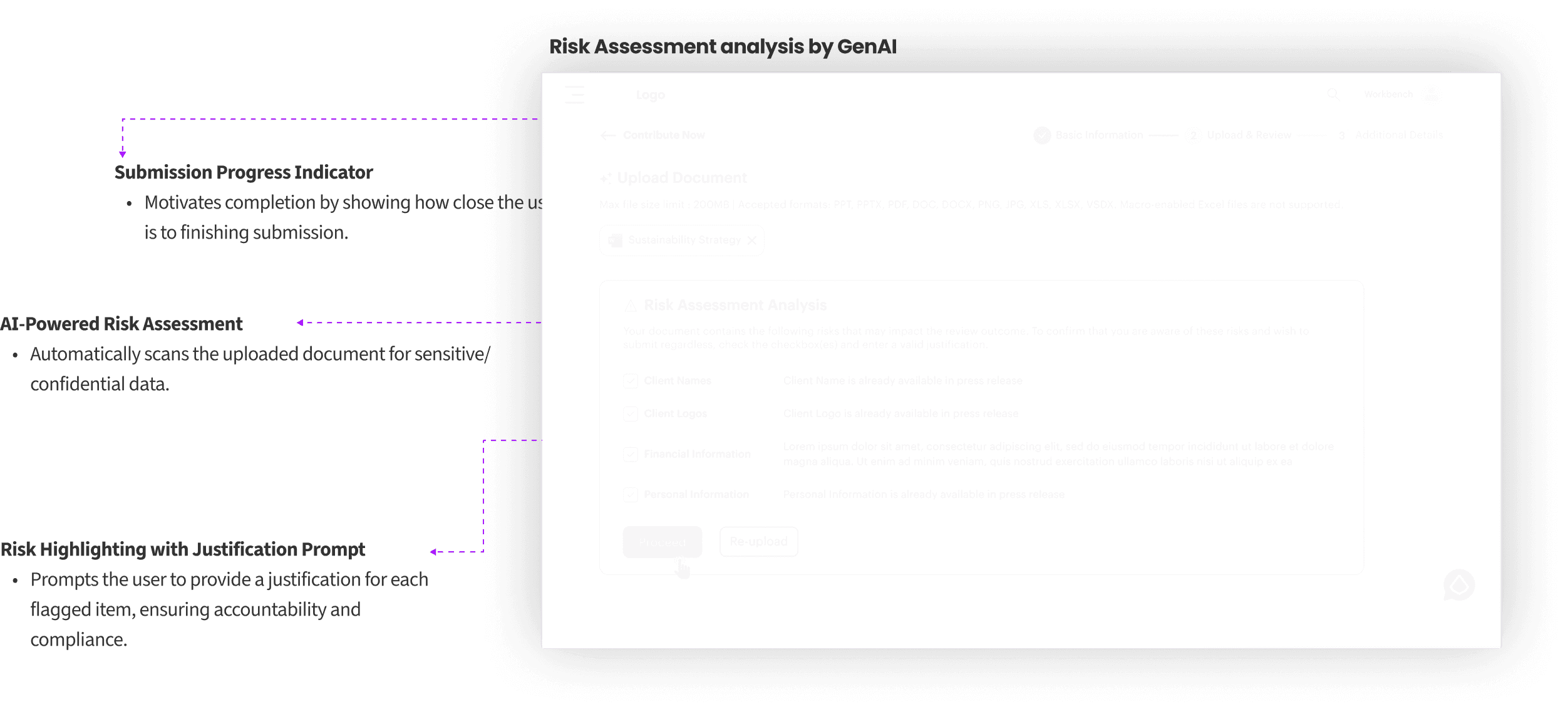

No visibility of risk or quality

Users want proof of resolution.

system patterns:

Insight Synthesis

After collecting quantitative and qualitative inputs, I synthesized findings using structured analysis frameworks to identify systemic root causes rather than surface-level pain points.

Frameworks used here:

Affinity Mapping

Behavioral Clustering

Workflow Breakdown Mapping

KPI Correlation - Root Cause Analysis

Drop-off & Friction Analysis

Below are the four main insights we got from the analysis.

The problem was structural, not visual.

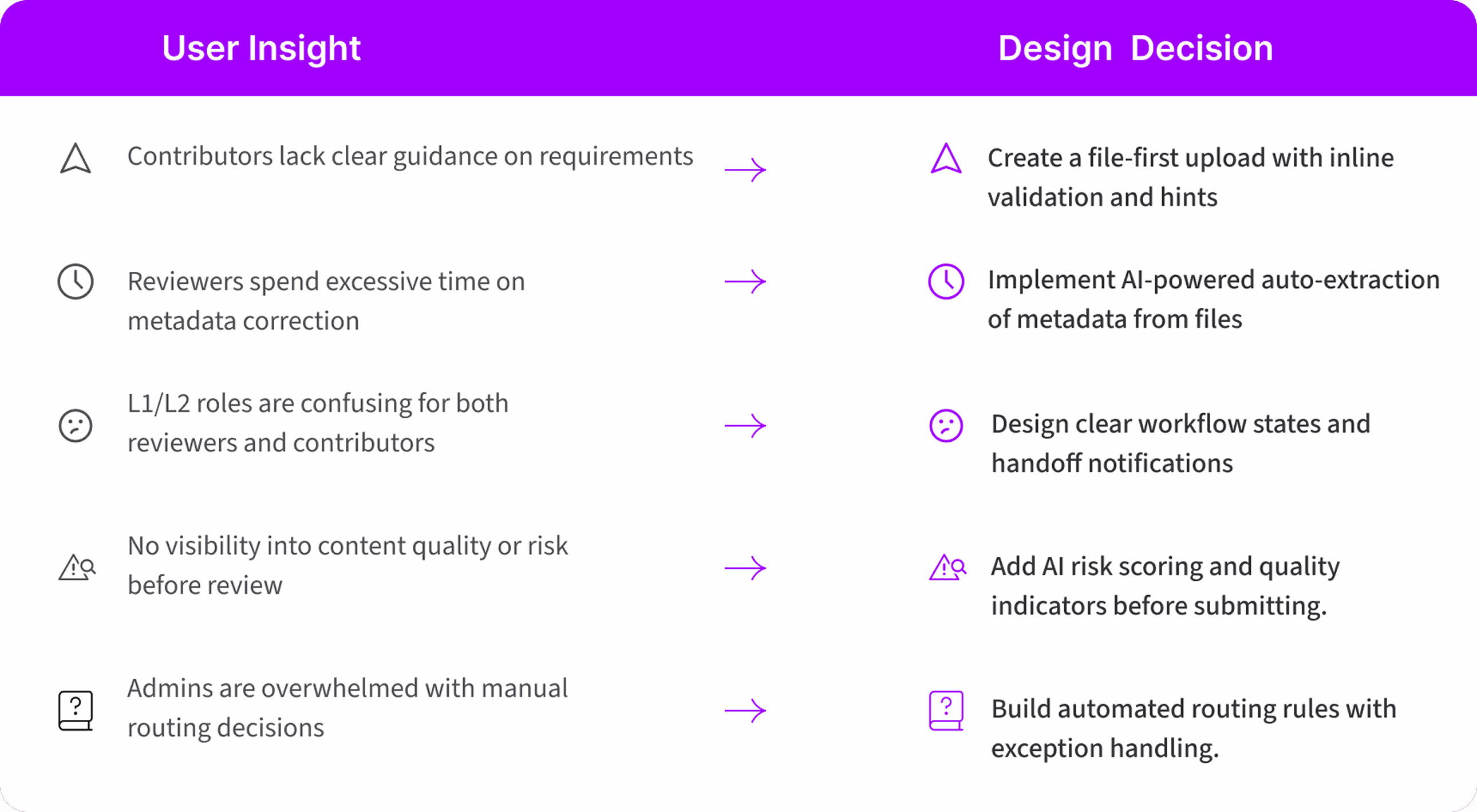

What Users Need

From the user insights

1

Need:

Clarity

Solution:

Guided Flow

2

Need:

Less Manual Work

Solution:

Automation

3

Need:

Confidence

Solution:

AI risk score

4

Need:

Visibility

Solution:

Clear L1 → L2 checkpoints

As-Is User Journey

system patterns:

Opportunity Framing

The analysis uncovered high-impact opportunities to transform Contribute from a manual review system into a scalable, governance-driven knowledge platform.

Converting validated insights into clearly defined, strategic improvement areas aligned to business impact.

How might we...

Turning problem statements into opportunity directions.

How might we make contributions error-free?

How might we support reviewers with automation?

How might we ensure quality at the source?

How might we streamline multi-stage reviews?

Insight → Design

How research findings directly informed design decisions

system patterns:

Feature Prioritization

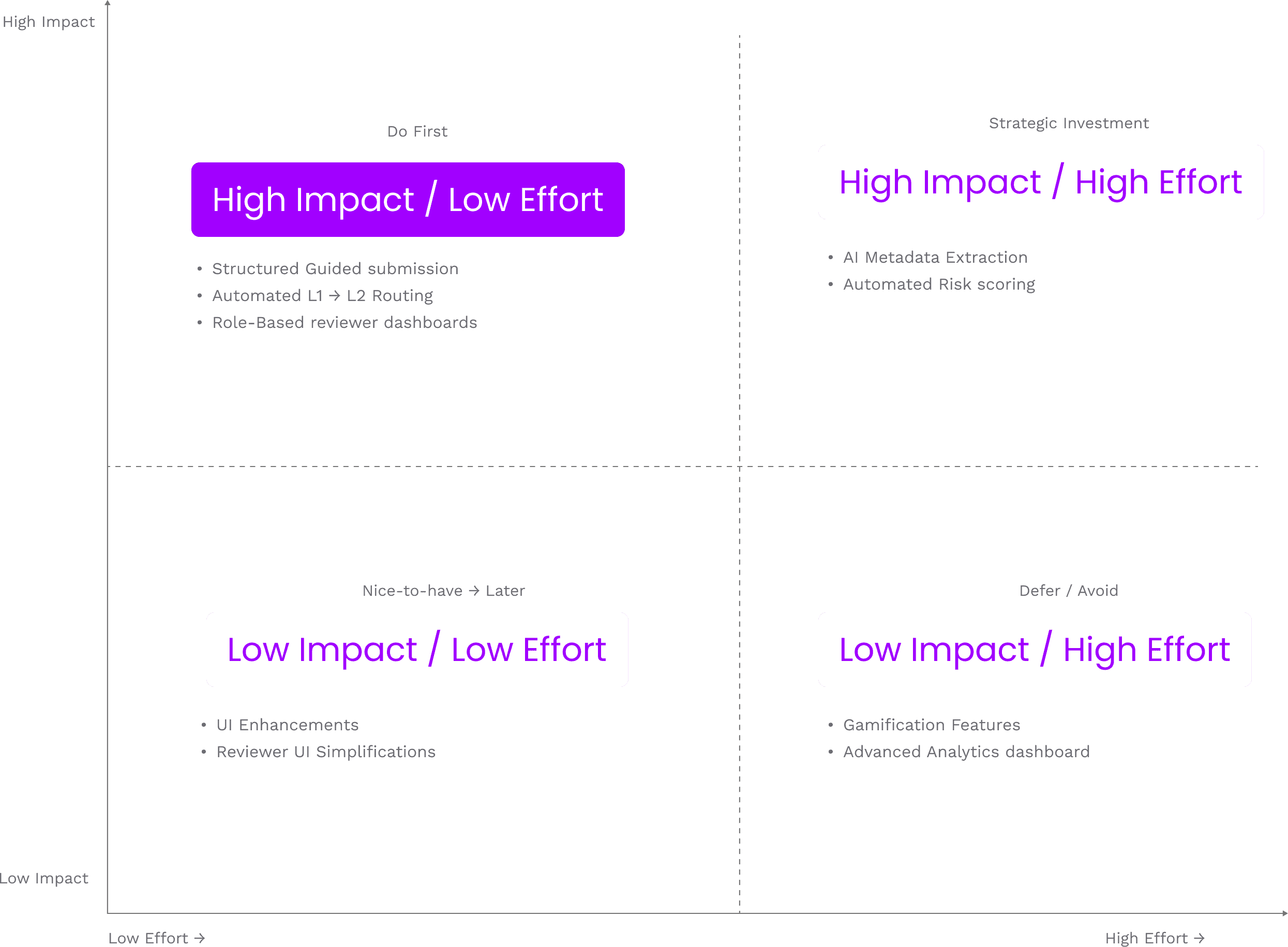

Impact vs. Effort Matrix

This decision matrix drove feature prioritization.

The impact-effort comparison helped us quickly identify high-value, low-complexity opportunities that could improve KPIs with minimal resource strain.

For each insight or feature, we estimated impact against the four criteria below, always checking whether it ultimately moved the north star metric.

Impact =

Reduction in review time

Reduction in manual intervention

Improvement in metadata accuracy

Reduction in rework cycles

We then estimated the time and effort each feature would take to complete.

It includes:

Engineering complexity

Data dependency

AI training requirement

Integration cost

Risk level

Timeline
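A minimal sketch of how the impact and effort estimates described above could be turned into a quadrant placement. The 1-5 rating scale, the equal weighting, the midpoint threshold of 3.0, and the quadrant labels are assumptions for illustration; the case study lists only the criteria, not the scoring scheme.

```python
# Criteria names mirror the lists above; keys are hypothetical identifiers.
IMPACT_CRITERIA = ("review_time", "manual_intervention", "metadata_accuracy", "rework_cycles")
EFFORT_FACTORS = (
    "engineering_complexity", "data_dependency", "ai_training",
    "integration_cost", "risk_level", "timeline",
)


def average_score(ratings: dict[str, int], keys: tuple[str, ...]) -> float:
    """Average 1-5 ratings over the given criteria (equal weights assumed)."""
    return sum(ratings[k] for k in keys) / len(keys)


def quadrant(impact: float, effort: float, threshold: float = 3.0) -> str:
    """Place a feature in an impact vs. effort quadrant."""
    if impact >= threshold and effort < threshold:
        return "quick win"
    if impact >= threshold:
        return "major project"
    if effort < threshold:
        return "fill-in"
    return "deprioritize"


# Made-up ratings for a hypothetical "guided metadata form" feature.
ratings = {
    "review_time": 5, "manual_intervention": 4, "metadata_accuracy": 4, "rework_cycles": 3,
    "engineering_complexity": 2, "data_dependency": 2, "ai_training": 1,
    "integration_cost": 2, "risk_level": 2, "timeline": 3,
}
impact = average_score(ratings, IMPACT_CRITERIA)
effort = average_score(ratings, EFFORT_FACTORS)
print(impact, effort, quadrant(impact, effort))
```

Equal weights keep the sketch simple; a team that treats review time as the north star metric might weight that criterion more heavily.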

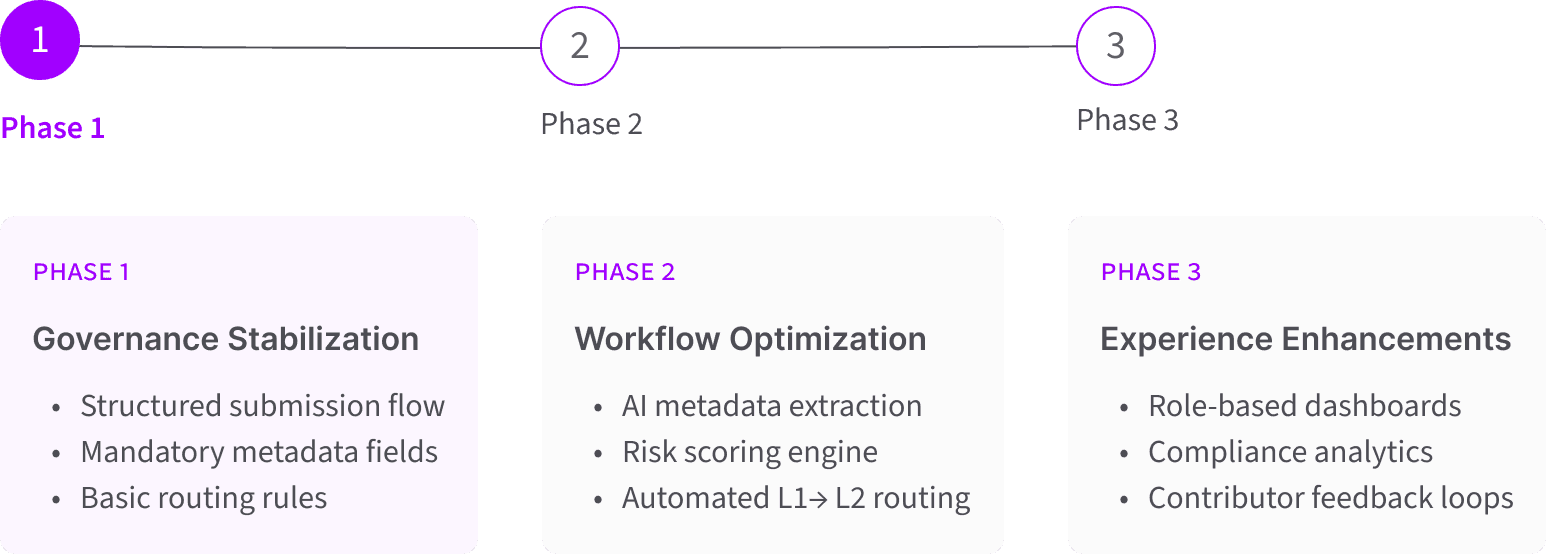

MVP & Roadmap Phasing

Value Rollout Plan

Based on prioritization and governance risk assessment, the MVP focused on stabilizing core workflow efficiency and metadata integrity before introducing advanced automation layers.

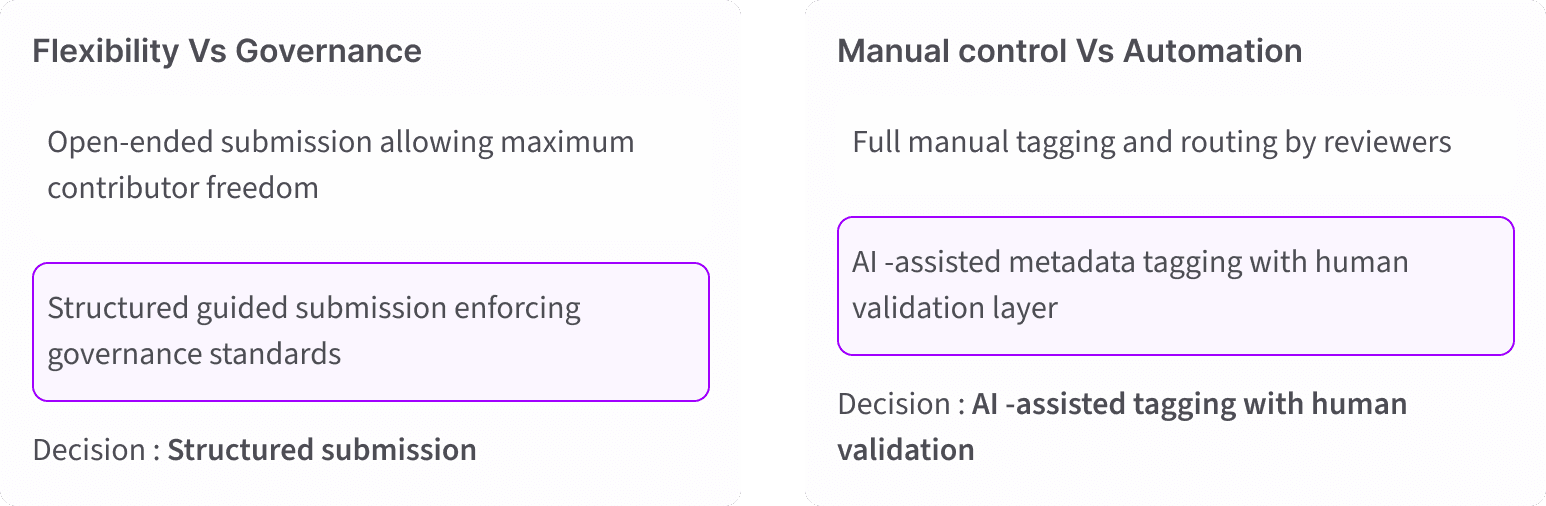

Trade-Off Analysis

Governance Trade-Offs

Redefining Problem Statement

Enterprise contributors need a guided, efficient way to submit high-quality knowledge assets because the current manual process creates inconsistencies, delays, and reviewer burnout. A successful solution will reduce submission errors, automate metadata extraction, and provide clear quality signals throughout the review workflow.

Final Structure

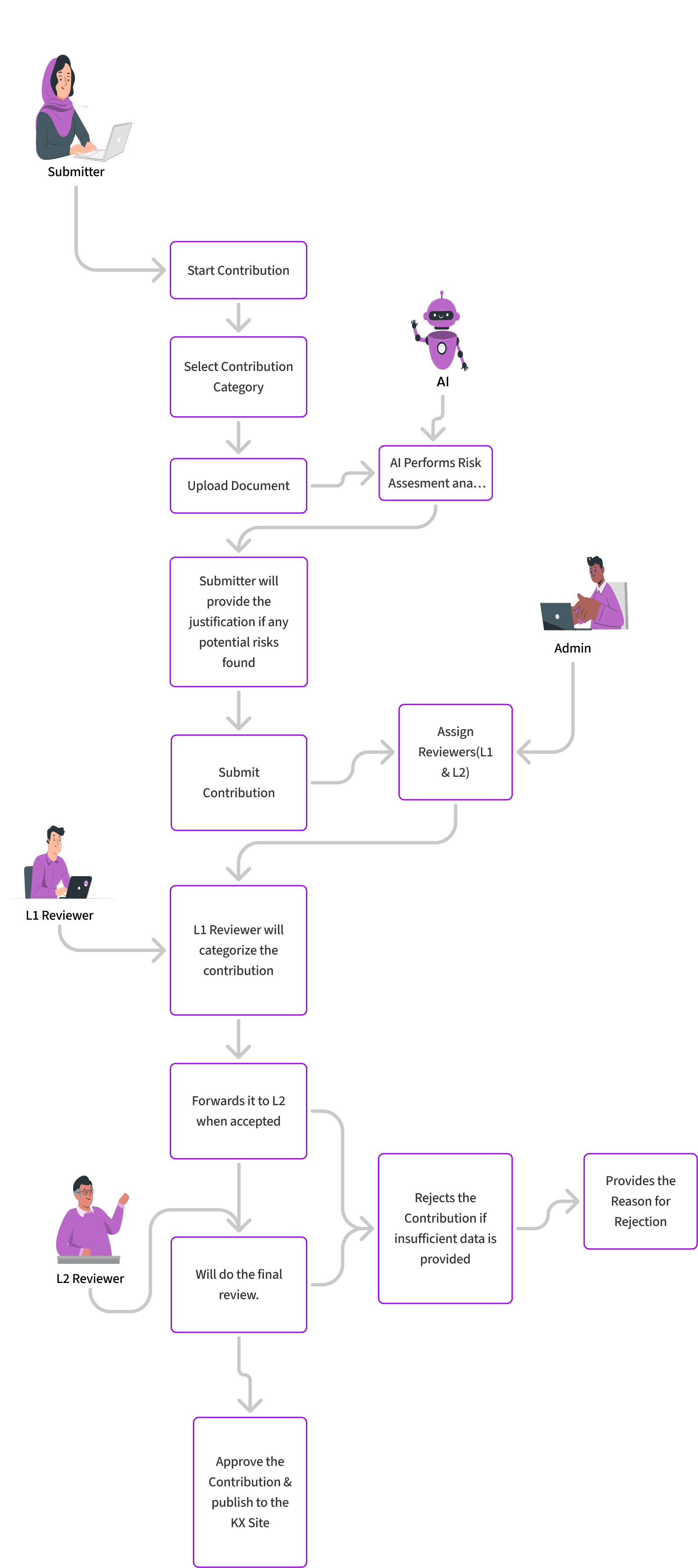

Mapping the new contribution flow

Easy, guided process for submitting content with minimal friction.

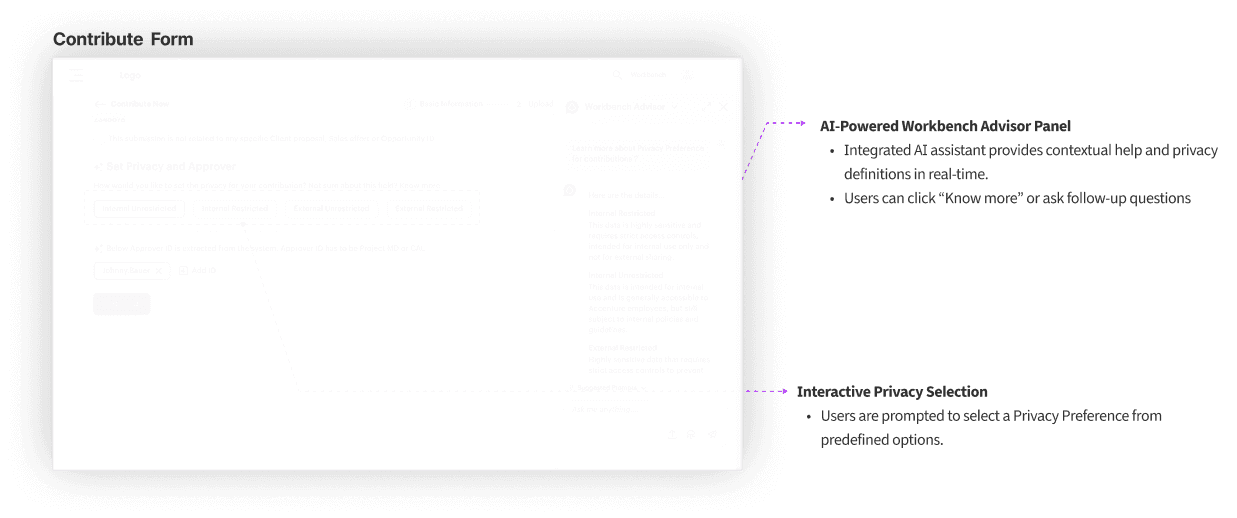

Automated quality checks, risk assessments, and improvement suggestions to support better contributions.

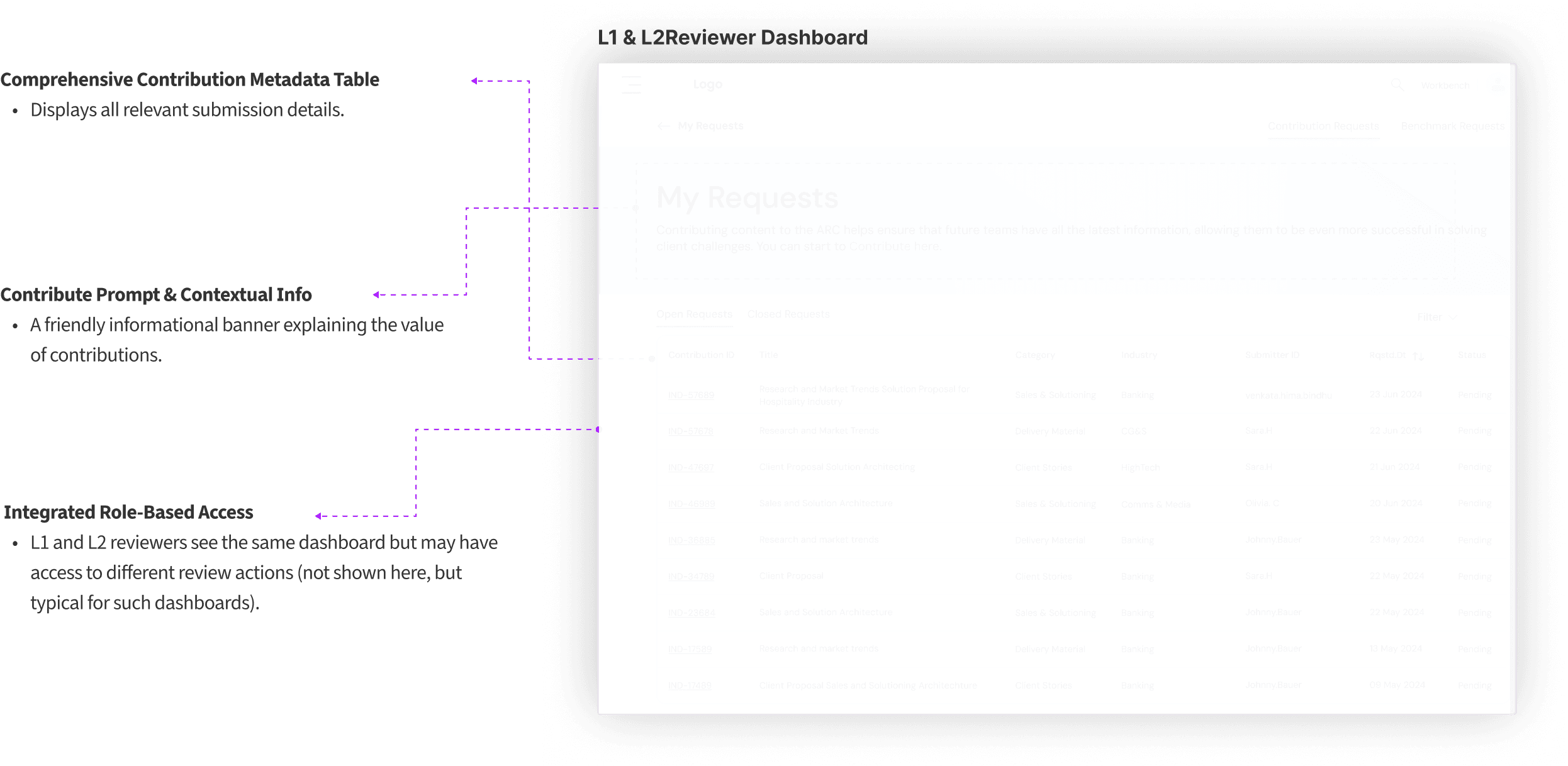

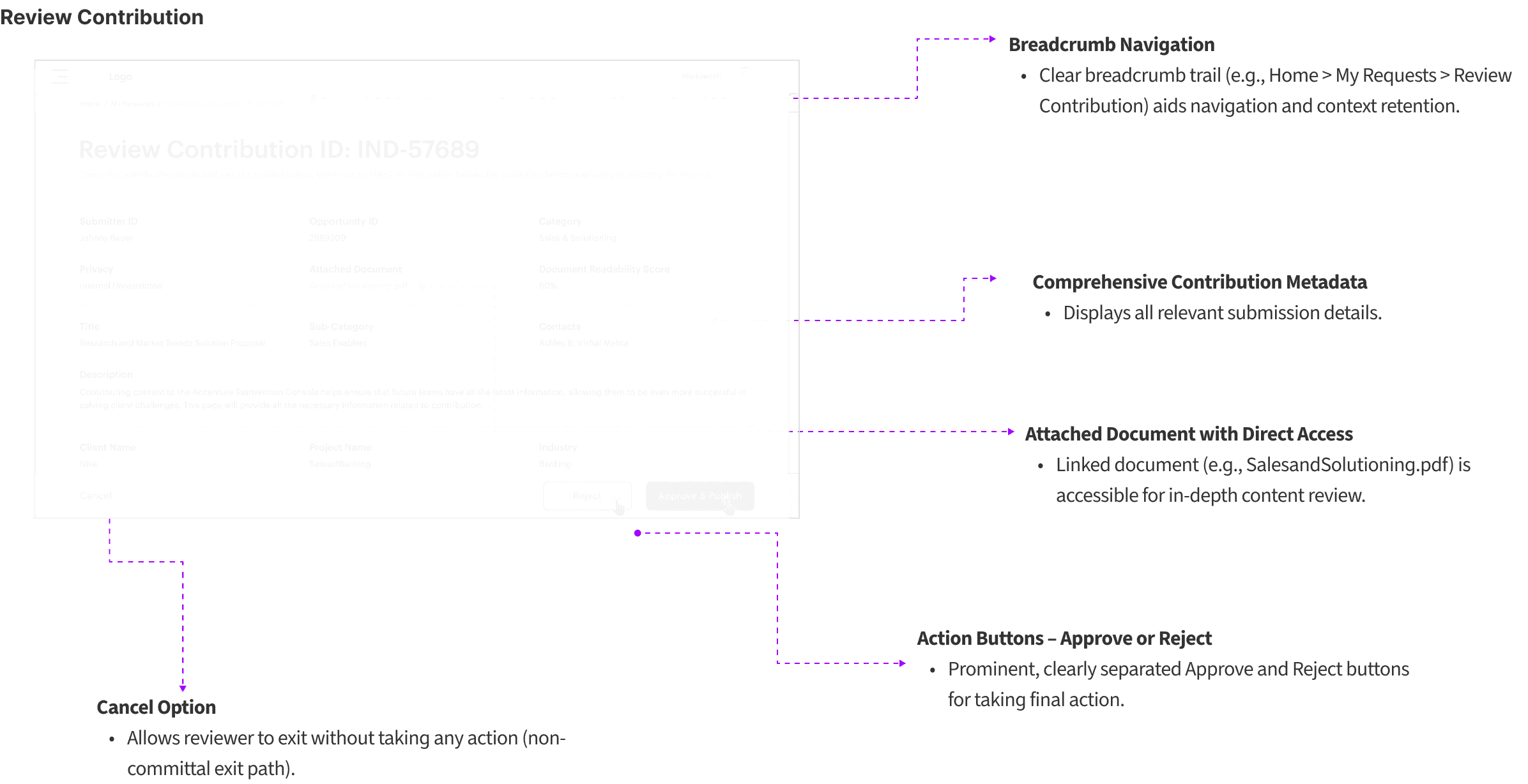

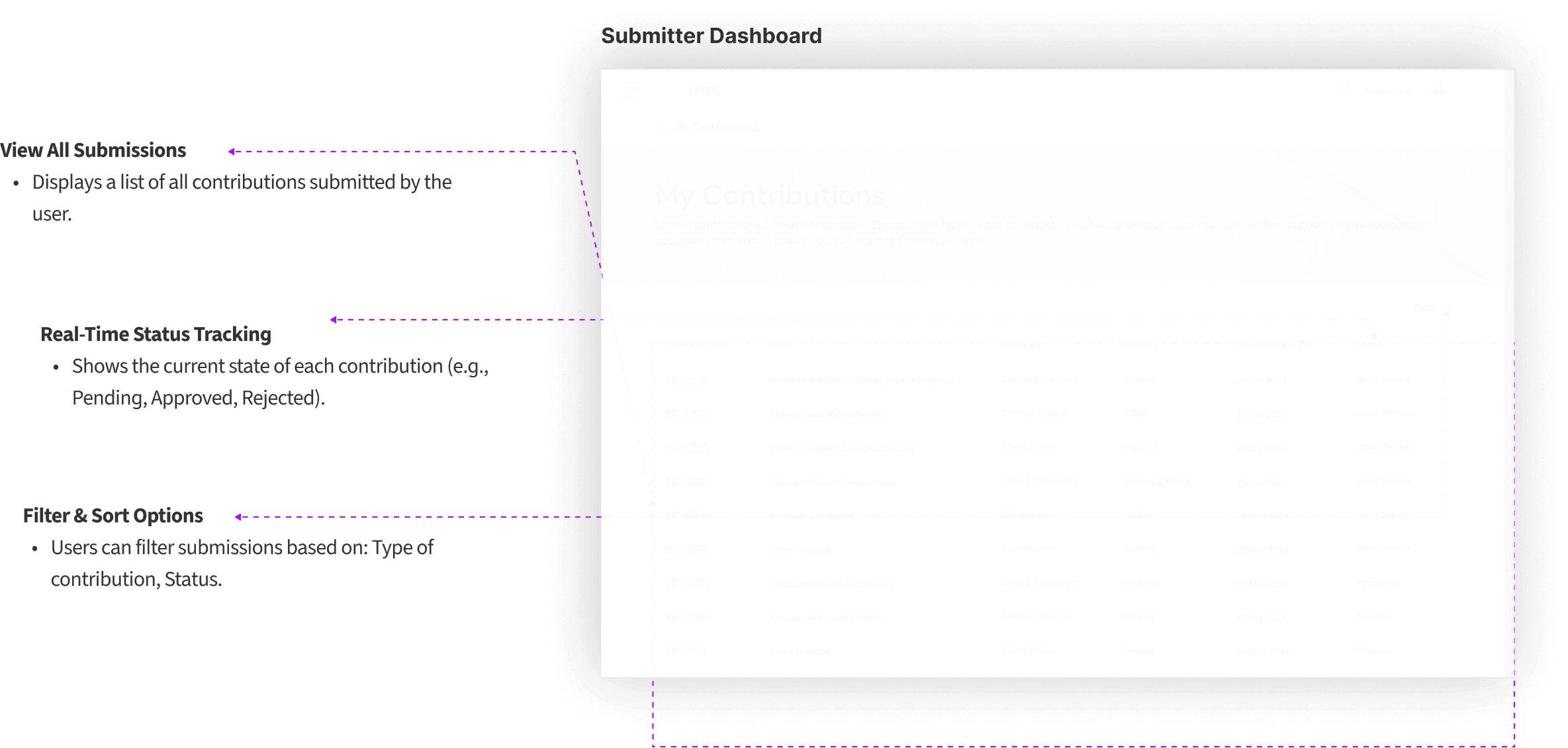

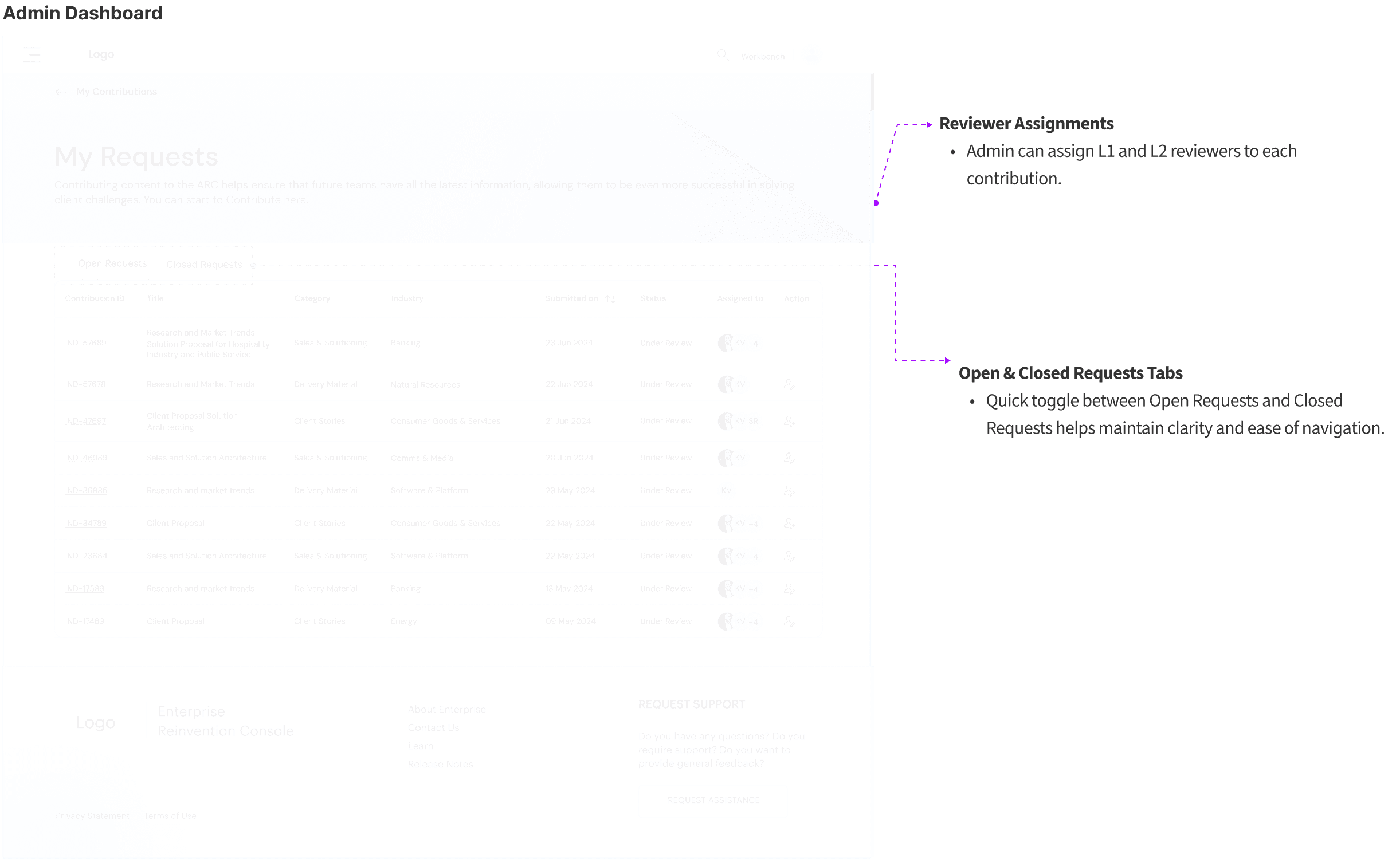

Personalized views for Submitters, Reviewers (L1 & L2), and Admins to manage their tasks effectively.

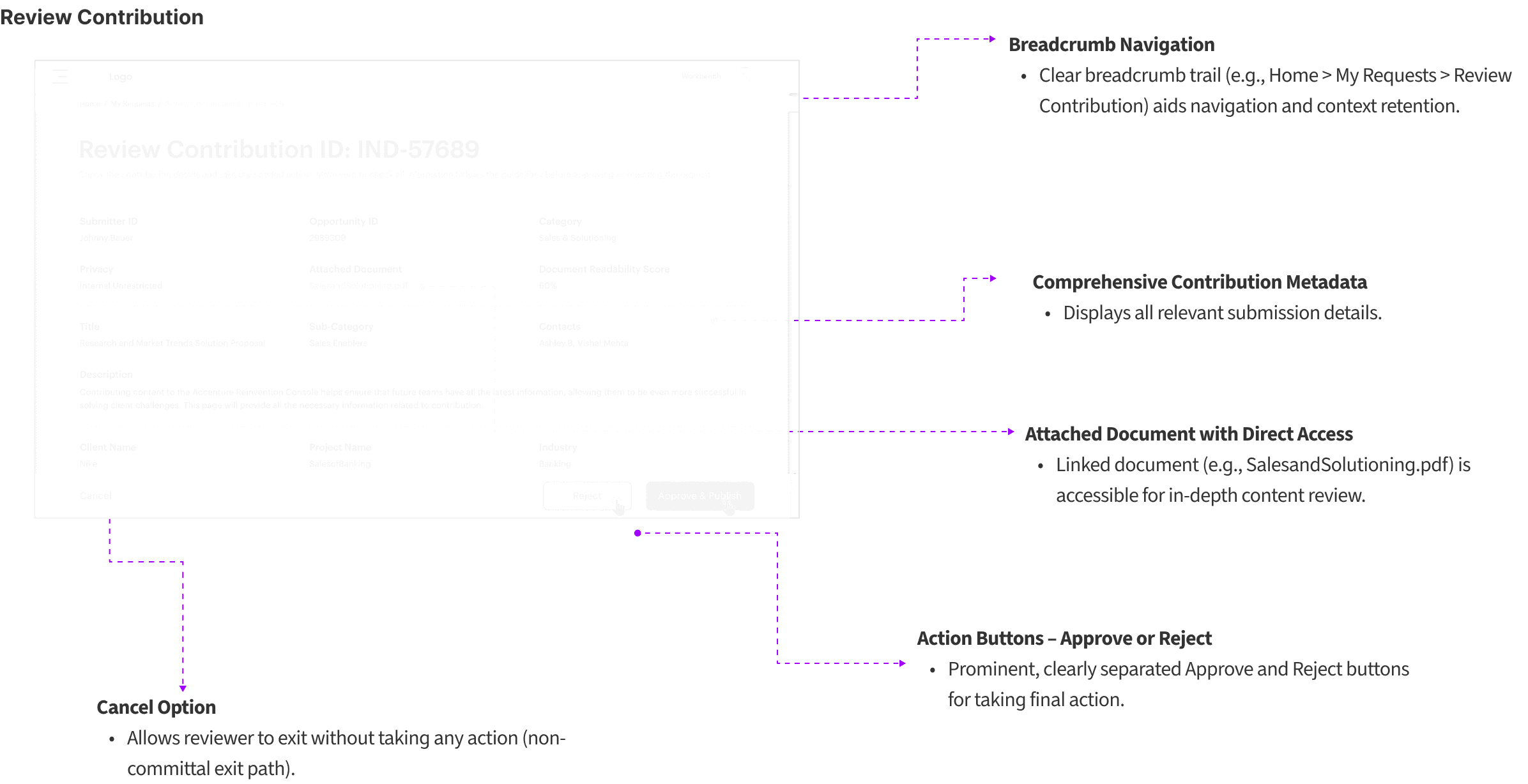

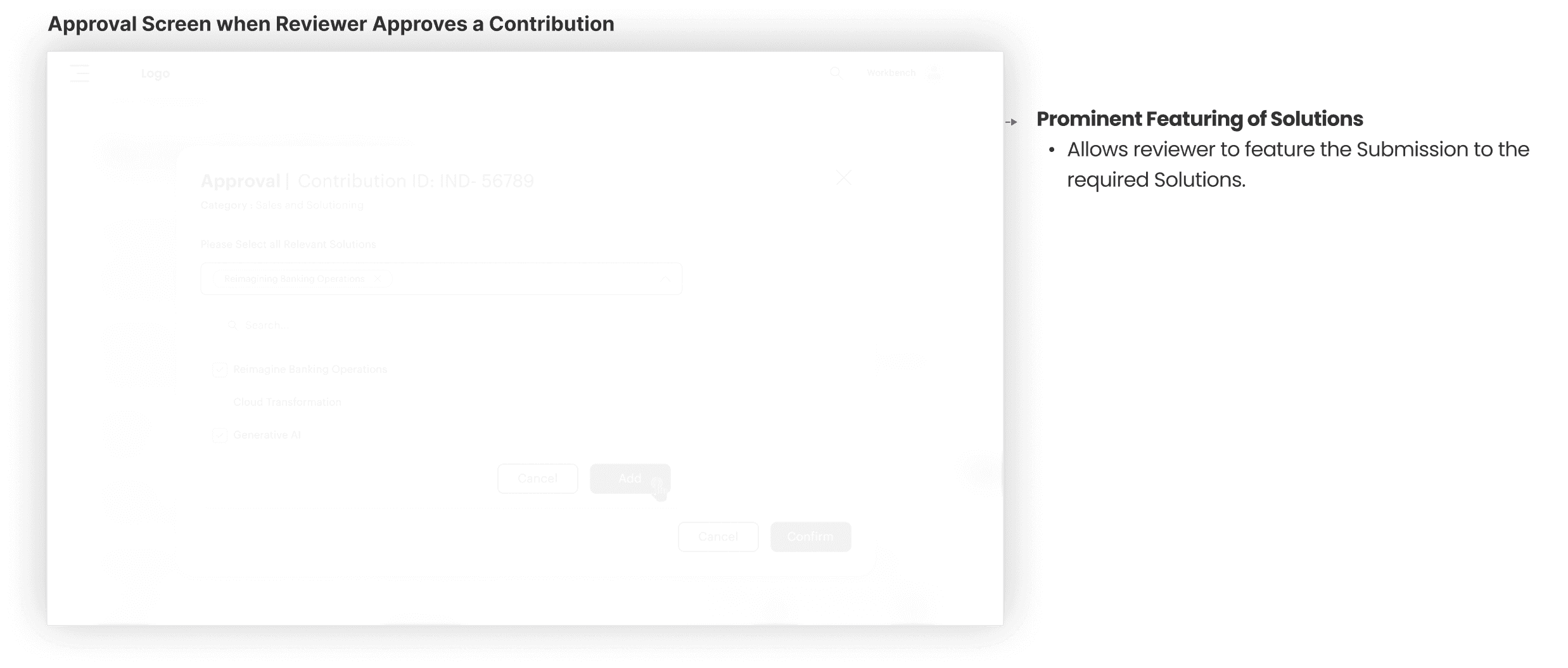

Seamless handoff between L1 and L2 reviewers with tools to approve, reject, or escalate contributions.
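The L1 to L2 handoff can be pictured as a small state machine. The sketch below is an assumption-laden illustration rather than the platform's actual logic: the stage names, the rule that only L2 can escalate (here, to a hypothetical compliance stage), and the requirement that a rejection carries a reason are all chosen for the example.

```python
from enum import Enum


class Stage(Enum):
    L1_REVIEW = "l1_review"
    L2_REVIEW = "l2_review"
    COMPLIANCE = "compliance"   # hypothetical escalation target
    PUBLISHED = "published"
    REJECTED = "rejected"


# Allowed (stage, action) pairs and the stage each one leads to (assumed rules).
TRANSITIONS = {
    (Stage.L1_REVIEW, "approve"): Stage.L2_REVIEW,
    (Stage.L1_REVIEW, "reject"): Stage.REJECTED,
    (Stage.L2_REVIEW, "approve"): Stage.PUBLISHED,
    (Stage.L2_REVIEW, "reject"): Stage.REJECTED,
    (Stage.L2_REVIEW, "escalate"): Stage.COMPLIANCE,
}


def apply_action(stage: Stage, action: str, reason: str = "") -> Stage:
    """Return the next stage, or raise ValueError for a disallowed action."""
    if action == "reject" and not reason.strip():
        raise ValueError("a rejection needs a reason")
    try:
        return TRANSITIONS[(stage, action)]
    except KeyError:
        raise ValueError(f"{action!r} is not allowed in {stage.value}") from None


stage = apply_action(Stage.L1_REVIEW, "approve")
print(stage)                                   # Stage.L2_REVIEW
print(apply_action(stage, "approve"))          # Stage.PUBLISHED
```

Keeping the allowed transitions in one table makes each role's checkpoints explicit and easy to audit.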

design approach :

Starting the design

In the Design phase, I translated insights into structured workflows, reimagined the contribution journey, and crafted a more intuitive file-first experience. I explored multiple variations, iterated through wireframes, and refined interactions to ensure contributors, reviewers, and admins each had a seamless, guided path.

User Flow

The To-Be journey outlines the redesigned, streamlined contribution workflow empowered by automation, clarity, and AI-assisted guidance.

Digital Wireframes

Before jumping into visual details, I created digital wireframes to map the structure and flow of the app. These helped me validate layout decisions early and make sure the experience stays simple and intuitive.

Below are some of the wireframes we created for Contribute.

Visual layouts

Visual Design

Below are some of the wireframes that we used for Contribute.

Usability Testing

Testing Overview

PARTICIPANTS

8 stakeholders across roles

METHOD

Remote moderated sessions

FOCUS AREAS

Upload flow, review process, dashboard

DURATION

30 - 40 min per person

Key Findings

Users needed clearer metadata hints → Added inline tooltips and example values

Reviewer dashboard needed bulk actions → Implemented multi-select with batch operations

AI risk score needed color clarity → Redesigned with traffic light system + numeric scale

L2 reviewers needed "reason for rejection" → Added structured feedback templates
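The traffic light plus numeric scale for the AI risk score can be sketched as a simple banding function. The 0-100 scale and the cut-offs at 34 and 67 are assumptions for illustration; the case study does not state the actual thresholds.

```python
def risk_band(score: int) -> str:
    """Map a 0-100 risk score to a traffic light color (assumed cut-offs)."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score < 34:
        return "green"
    if score < 67:
        return "amber"
    return "red"


def risk_label(score: int) -> str:
    """Pair the color with the number so the signal never relies on color alone."""
    return f"{risk_band(score).upper()} ({score}/100)"


print(risk_label(20))   # GREEN (20/100)
print(risk_label(82))   # RED (82/100)
```

Showing the number next to the color keeps the signal readable for reviewers who cannot distinguish the colors.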

what changed :

Outcome

Introduced a file-first, guided contribution flow that reduced confusion and submission errors.

Improved Submission Quality

Contributors are guided through a structured, file-first submission and AI-assisted metadata.

Enabled clear role-based workflows

Prevents bottlenecks and accountability gaps at scale.

Enabled faster L1 → L2 reviews

Faster handoffs with clear workflows

Made the system usable at enterprise scale.

Reviewers can bulk review, filter, and prioritize submissions

Takeaways

Projected Improvements

What I Learned

1

Enterprise UX is about role clarity, not just visuals

2

AI must support, not replace, decision-making

3

Metadata structure defines discoverability

4

Review workflows require empathy + efficiency balance

Next Steps

1

Pilot rollout with select contributor groups

2

Bulk actions expansion for high-volume reviewers

3

Integration of advanced AI quality scoring

4

Additional analytics dashboards for admins

5

Conduct follow-up usability testing on the new iteration.

6

Identify any additional areas of need and ideate on new features.