Designing Trust in Multi-Agent Workflows

Region

Hyderabad, Telangana

Year

2025

Product Type

AI-Powered Enterprise Workflow Platform

Industry

Enterprise SaaS, AI / GenAI, Knowledge Management, Productivity Tech

The project itself:

Project Overview

Modern organizations use AI systems to automatically detect security threats such as phishing messages. These systems are powerful, but trust in them is fragile.

In many organizations, an AI-powered security engine flags suspicious messages. Internally, these alerts are validated across multiple automated agents. Users, however, see only raw JSON logs, which leaves them confused about why a decision was made.

This project focuses on designing a trust-first decision system, where AI insights are validated, explained, governed by business rules, and finally approved by humans before any action is taken.

Problem:

Many B2B SaaS companies use AI to predict customer churn, but these predictions often appear as opaque scores or alerts with little explanation. Business teams struggle to understand why a customer was flagged, how confident the system is, or whether immediate action is necessary. As a result, AI insights are either blindly followed or completely ignored, leading to poor decision-making and lost revenue.

Goal:

The goal of this project was to design a trust-first decision system that helps business teams understand, evaluate, and act on AI-generated churn insights with confidence. The system aims to make AI reasoning transparent, apply business rules visibly, and ensure humans remain accountable for final decisions.

My role:

Product Designer (End-to-End), supporting GenAI workflow integration.

Responsibilities:

Defined the problem and scope

Designed the AI trust flow

Conducted secondary research

Created the dashboard UI

Defined human decision states

Documented the design rationale

Background Insight:

Historical Context

Traditionally, churn prevention relied on manual tracking, CRM notes, and reactive customer outreach. With the rise of AI, predictive models began identifying churn risks earlier, but interface design did not evolve at the same pace. Most systems display predictions without context, creating a gap between AI capability and human trust.

Background Insight:

Scope

This was a conceptual systems design project focused on exploring interaction patterns and decision flows. Due to the exploratory nature of the problem and lack of access to real enterprise users, the project relied entirely on secondary research and analytical reasoning rather than direct user interviews.

Regional Insight:

Contextual Research

Industry research shows that lack of explainability and trust is a major barrier to AI adoption in enterprise contexts. According to McKinsey, 40% of organizations identify explainability as a key risk in AI use, yet few are actively mitigating it.

This project relied on secondary research, including industry reports from consulting firms (McKinsey, IBM, Gartner), enterprise AI adoption studies, and articles on explainable AI and human-in-the-loop systems.

Key Insights:

Based on the research, four recurring themes emerged across industries:

Lack of trust in AI decisions

Poor explainability of AI outputs

Unclear human accountability

Misalignment between AI insights and business rules

These themes directly informed the design direction of the project.

all about the user

User Research

Because this project addresses internal enterprise decision systems, access to real users and proprietary workflows was not feasible. Rather than simulating interviews or inventing user findings, the project synthesized well-documented industry findings and applied systems-level reasoning to propose a robust design solution.

This approach mirrors early-stage product discovery and conceptual design work commonly done in enterprise environments.

As-Is User Journey

Secondary Research

How AI insights are handled today

1

AI flags risk.

Pain Point

2

User sees a score without context.

Pain Point

3

User hesitates or ignores insight.

Pain Point

4

No clarity if action worked.

Pain Point

How might we?

Turning problem statements into opportunity directions.

How might we design an AI decision system that:

1

Explains reasoning in human language

2

Shows confidence and uncertainty clearly

3

Applies business rules transparently

4

Keeps humans accountable for final decisions
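
One way to picture the first two directions is a small function that turns a raw score into a sentence a reviewer can read. The Python sketch below is illustrative only: the `Insight` fields, the band thresholds (0.8 and 0.5), and the wording are assumptions made for this example, not part of the project.

```python
from dataclasses import dataclass


@dataclass
class Insight:
    """A hypothetical AI insight; all field names are illustrative."""
    subject: str            # e.g. a customer account name
    score: float            # model confidence between 0.0 and 1.0
    top_factors: list[str]  # the signals that contributed most


def confidence_band(score: float) -> str:
    """Map a raw score to a plain-language band (thresholds are assumed)."""
    if score >= 0.8:
        return "high"
    if score >= 0.5:
        return "moderate"
    return "low"


def explain(insight: Insight) -> str:
    """Turn a raw score into a sentence a non-technical reviewer can read."""
    factors = ", ".join(insight.top_factors) or "no dominant signal"
    return (
        f"{insight.subject} was flagged with {confidence_band(insight.score)} "
        f"confidence ({insight.score:.0%}). Main reasons: {factors}. "
        "A human reviewer makes the final decision."
    )


if __name__ == "__main__":
    example = Insight("Acme Corp", 0.82, ["login frequency dropped",
                                          "two unresolved support tickets"])
    print(explain(example))
```

Showing both the band and the percentage keeps the uncertainty visible instead of hiding it behind a single verdict.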

Pain Points

Based on the secondary research and industry insights, these pain points highlight why teams struggle to trust and act on AI-generated insights: not because the AI is inaccurate, but because its decision process is difficult to understand and control.

AI decisions feel like a black box.

Lack of explainability reduces trust.

Technical logs are not human-readable.

Unclear confidence leads to hesitation.

Fear of taking the wrong action increases risk aversion.

There is no clear handoff to human judgement.

Regional Insight:

Assumptions

Since this was a concept project, the following assumptions were made:

Key Assumptions:

AI predictions already exist and are reasonably accurate

Business users are non-technical but accountable

Decisions have financial or customer impact

Human review is necessary for high-risk cases

These assumptions were validated against industry research.

The Solution

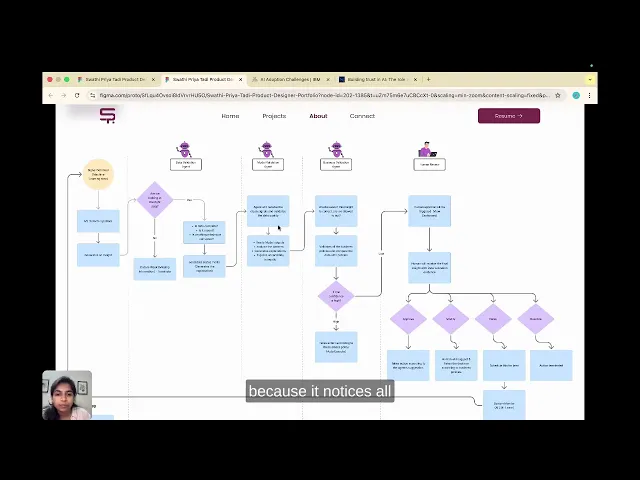

The proposed solution is an AI Insight → Trusted Decision Flow, where insights are validated step-by-step through data checks, reasoning, policy enforcement, and human review before any action is taken.

Trust Chain Concept

Each AI "agent" is represented as a validation layer in a visible sequence. Agents are not shown as bots or models, but as human-readable checks:

1

Data Analysis

Pattern matching & historical data

2

Model Confidence

AI classifier evaluation

3

Policy Check

Internal security rules

4

Human Review

Manual verification option

Trust is not binary. It is built step-by-step. Instead of showing a final verdict, we need to show a chain of trust.
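
The trust chain can be sketched in code as an ordered list of checks, each returning a status and a plain-language note. This Python sketch is a minimal illustration under stated assumptions: the dictionary keys (`matching_patterns`, `confidence`, `violates_policy`), the 0.8 threshold, and the choice to stop at the first failed check are all hypothetical and are not taken from the project.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Status(Enum):
    PASSED = "passed"
    NEEDS_REVIEW = "needs review"
    FAILED = "failed"


@dataclass
class CheckResult:
    layer: str
    status: Status
    note: str  # plain-language explanation shown to the reviewer


# Each layer takes the insight (a plain dict here) and returns a CheckResult.
Layer = Callable[[dict], CheckResult]


def data_analysis(insight: dict) -> CheckResult:
    ok = insight.get("matching_patterns", 0) > 0
    return CheckResult("Data Analysis", Status.PASSED if ok else Status.FAILED,
                       "Matches historical patterns" if ok else "No supporting data")


def model_confidence(insight: dict) -> CheckResult:
    score = insight.get("confidence", 0.0)
    # Assumed threshold: below 0.8 the layer asks for a closer look.
    status = Status.PASSED if score >= 0.8 else Status.NEEDS_REVIEW
    return CheckResult("Model Confidence", status, f"Model confidence is {score:.0%}")


def policy_check(insight: dict) -> CheckResult:
    blocked = insight.get("violates_policy", False)
    return CheckResult("Policy Check", Status.FAILED if blocked else Status.PASSED,
                       "Conflicts with an internal rule" if blocked else "Within internal rules")


def run_trust_chain(insight: dict, layers: list[Layer]) -> list[CheckResult]:
    """Run automated layers in order, then always end with a human review step."""
    results = []
    for layer in layers:
        result = layer(insight)
        results.append(result)
        if result.status is Status.FAILED:
            break  # stop early; the reviewer sees exactly where the chain broke
    results.append(CheckResult("Human Review", Status.NEEDS_REVIEW,
                               "Awaiting a reviewer's decision"))
    return results


if __name__ == "__main__":
    sample = {"matching_patterns": 3, "confidence": 0.65, "violates_policy": False}
    for step in run_trust_chain(sample, [data_analysis, model_confidence, policy_check]):
        print(f"{step.layer}: {step.status.value} ({step.note})")
```

Returning every intermediate result, rather than only the last one, is what lets the interface show the chain instead of a single verdict.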

User Flow

Information Architecture

Visual Design System

The design system uses semantic colors and clear status indicators to communicate trust levels at a glance.
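
As a rough illustration of semantic status styling, each trust status can map to one fixed visual treatment. The status names, colors, icons, and labels in this Python sketch are hypothetical placeholders, not the project's actual design tokens.

```python
# Hypothetical mapping from a check's status to its visual treatment.
# Every status pairs a color with an icon and a text label, so meaning
# does not depend on color alone.
STATUS_STYLES = {
    "passed": {"color": "green", "icon": "check", "label": "Verified"},
    "needs_review": {"color": "amber", "icon": "alert", "label": "Needs review"},
    "failed": {"color": "red", "icon": "cross", "label": "Blocked"},
}

# Neutral fallback for any status the mapping does not know about.
DEFAULT_STYLE = {"color": "gray", "icon": "dot", "label": "Unknown"}


def style_for(status: str) -> dict:
    """Return the visual treatment for a status, or a neutral fallback."""
    return STATUS_STYLES.get(status, DEFAULT_STYLE)


if __name__ == "__main__":
    for name in ("passed", "needs_review", "failed", "pending"):
        print(name, style_for(name))
```

Keeping the mapping in one place means a status always looks the same wherever it appears in the dashboard.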

design approach:

Starting the design

The visual design focuses on reducing cognitive load and conveying trust in AI decisions through calm layouts, clear hierarchy, and semantic visual cues.

what changed:

Outcome

Designed a trust-first AI decision system that makes churn predictions explainable, reviewable, and human-controlled.

Transformed opaque AI alerts into structured insights that support confident business action.

Takeaways


What I Learned

1

Trust is emotional; technical accuracy alone does not create it.

2

Visibility builds confidence.

3

Human-in-the-loop design increases accountability without slowing workflows.

4

Trust is built through clarity, not magic.

5

Systems thinking > UI polish.

Next Steps

1

Validate the trust flow with real customer success and operations teams through usability testing.

2

Explore adaptive, automated confidence thresholds for when human review is required.

3

Add usage analytics to see how the flow is used.

4

Extend the trust framework to other AI insights beyond churn.

5

Explore deeper agent collaboration.
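
The confidence thresholds mentioned in the next steps have not been designed yet. As a starting point for that exploration, the Python sketch below shows one possible shape: the confidence required to skip mandatory review rises with business impact and with how often reviewers have recently overturned AI suggestions. The function names, inputs, and weights (0.1 and 0.2) are assumptions made for illustration only.

```python
def review_threshold(base: float, impact: float, recent_override_rate: float) -> float:
    """Return the confidence below which a human must review an insight.

    base: starting threshold, e.g. 0.8 (assumed)
    impact: estimated business impact scaled to 0.0-1.0 (assumed scale)
    recent_override_rate: share of recent AI suggestions reviewers overturned
    """
    threshold = base + 0.1 * impact + 0.2 * recent_override_rate
    return min(threshold, 1.0)  # a threshold above 1.0 would be meaningless


def needs_human_review(confidence: float, impact: float,
                       recent_override_rate: float, base: float = 0.8) -> bool:
    """True when the model's confidence falls below the adaptive threshold."""
    return confidence < review_threshold(base, impact, recent_override_rate)


if __name__ == "__main__":
    # Same confidence, different impact: the high-impact case goes to a human.
    print(needs_human_review(0.85, impact=0.0, recent_override_rate=0.0))
    print(needs_human_review(0.85, impact=1.0, recent_override_rate=0.0))
```

A real version would need to be tuned with the teams who own the decisions, which is why it sits under next steps rather than in the current design.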